The Challenge

The widespread use of remote camera traps offers unprecedented insights into wild animals, their behavior, and their abundance. Yet, researchers and practitioners still struggle with processing the huge amounts of visual information that quickly accumulate during camera trap field surveys.

Camera-animal observation distance is a crucial parameter for the estimation of animal abundance or establishment of social network structure. The depth estimation models and level of precision achieved by the winning teams offer massive time saving in the range of 40-60% for the processing of camera trap footage. These will help us to develop effective monitoring approaches for surveying hundreds of wildlife species.

Hjalmar Kühl, Senior Scientist at the iDiv (German Centre for Integrative Biodiversity Research Halle-Jena-Leipzig)

Motivation

Healthy natural ecosystems have wide-ranging benefits from public health to the economy to agriculture. In order to protect the Earth's natural resources, conservationists need to be able to monitor species population sizes and population change. Camera traps are widely used in conservation research to capture images and videos of wildlife without human interference. Using statistical models for distance sampling, the frequency of animal sightings can be combined with the distance of each animal from the camera to estimate a species' full population size.

However, getting distances from camera trap footage currently entails an extremely manual, time-intensive process. It takes a researcher more than 10 minutes on average to label every 1 minute of video! This creates a bottleneck for conservationists working to understand and protect wild animals and their ecosystems.

The goal of the Deep Chimpact Challenge was to automate distance estimation for wild animals in camera trap videos. Better distance estimation models can rapidly accelerate the availability of critical information for wildlife monitoring and conservation.

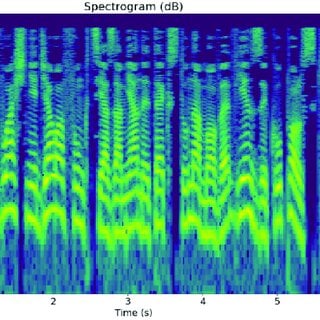

Left: An image of a chimpanzee from a camera trap. Right: The depth mask generated for the image by the monodepth2 model available on GitHub.

Results

Over the course of the competition, participants tested over 900 solutions and were able to significantly advance existing methods of depth estimation in wildlife contexts. To kick off the competition, DrivenData released a benchmark solution developed by MathWorks that combined optical flow with a convolutional neural network.

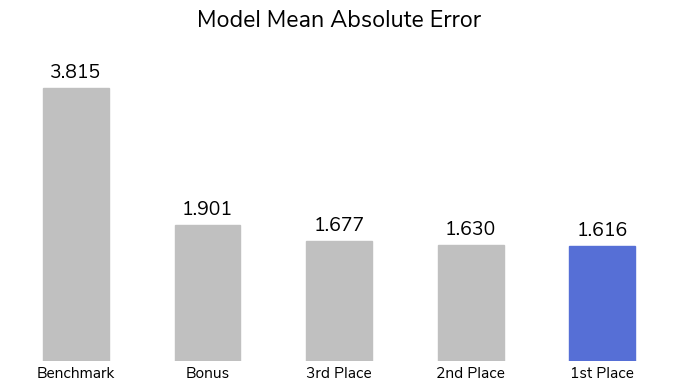

One of the most recent studies applying machine learning to depth estimation, "Overcoming the Distance Estimation Bottleneck in Estimating Animal Abundance with Camera Traps" (2021), used monocular depth estimation to achieve a mean absolute error of 1.85 m. Mean absolute error (MAE) measures the average magnitude of the differences between estimated values and ground truth values. The method relied on a series of reference videos that required field researchers to travel to and visually document distances at each camera trap location.
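As a quick illustration of the metric, here is a minimal sketch of MAE in plain Python. The distances in the example are made up for illustration and are not taken from the competition data.

```python
def mean_absolute_error(y_true, y_pred):
    """Average magnitude of the differences between estimates and ground truth."""
    if len(y_true) != len(y_pred):
        raise ValueError("y_true and y_pred must have the same length")
    if not y_true:
        raise ValueError("inputs must be non-empty")
    return sum(abs(t - p) for t, p in zip(y_true, y_pred)) / len(y_true)


# Hypothetical distances in meters, for illustration only.
true_m = [2.0, 5.0, 10.0, 20.0]
pred_m = [2.5, 4.0, 12.0, 17.0]
mae = mean_absolute_error(true_m, pred_m)  # absolute errors: 0.5, 1.0, 2.0, 3.0
```

Because every error counts in proportion to its size, a few large misses on distant animals can dominate the score even when close-range predictions are good.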

To more fully automate this time-intensive process, competitors were not provided with reference videos. But our winners were not deterred! The top-scoring solutions improved on the state-of-the-art method, with the top model achieving an MAE of 1.62 m. These innovative models could help make depth estimation more accessible to conservationists around the world, even those without the time or resources to create reference videos.

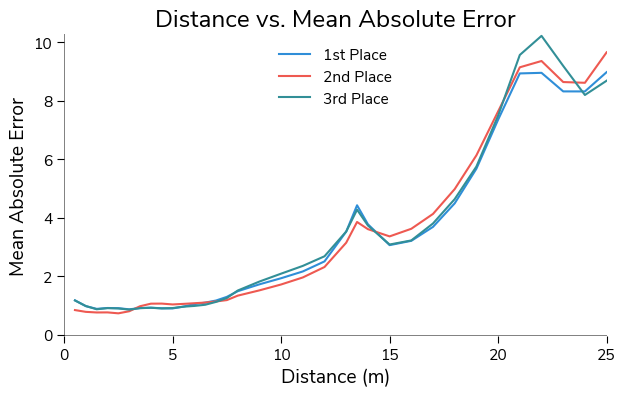

Moreover, the winning approaches were able to predict distance much more accurately in general when the animal was closer to the camera trap. This is important for conservation applications since accurate depth for closer animals matters more than for those farther away when using distance sampling to estimate population sizes. For this dataset, closer animals are also much more common than animals farther away; only about 8% of the images show an animal 15 meters away or farther.

To achieve these results, the winners brought a wide variety of creative strategies to this task! Below are a few of the common takeaways across solutions.

- Ensembling: All of the winning solutions used some form of ensembling. Many trained multiple different types of deep learning model backbones. Some trained the same model backbone multiple times on different subsets, or "folds", of the data. The Bonus winners used MATLAB's Regression Learner toolbox to combine the results of two random partitions using a boosted tree. Ensembling, particularly with folds, can help avoid overfitting a model on the training data.

- Image augmentation: All of the winning solutions used significant augmentation on the training images. These included flips, rotations, blurs, distortions, cutouts, color augmentations, and more! Adding augmentation can prepare a model for a wider variety of scenarios, increasing generalizability.

- Stacked image sequences: Both the second and third place winners used sequences of stacked images, rather than individual images, to predict distance. Second-place winner Kirill Brodt stacked 5 frames into an array for each prediction. Third-place winner Igor Ivanov used sequences of 7 or 9 frames with an interval of either 1 or 2 seconds between frames. Sequences of images are able to capture motion much better than a single, static image.
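To make the fold-based ensembling idea concrete, here is a minimal sketch in plain Python. The helper names and the prediction values are hypothetical, and the split is deliberately simple: it does not group frames by video or stratify by distance, which a real pipeline would likely need.

```python
def kfold_indices(n_samples, n_folds):
    """Yield (train_idx, valid_idx) pairs; every sample is held out exactly once."""
    indices = list(range(n_samples))
    for fold in range(n_folds):
        valid = indices[fold::n_folds]
        valid_set = set(valid)
        train = [i for i in indices if i not in valid_set]
        yield train, valid


def average_predictions(per_model_predictions):
    """Simple-average ensemble: mean prediction per sample across models."""
    n_models = len(per_model_predictions)
    return [sum(column) / n_models for column in zip(*per_model_predictions)]


folds = list(kfold_indices(n_samples=10, n_folds=5))

# Hypothetical distance predictions (meters) from three fold models for four frames.
fold_predictions = [
    [3.0, 7.5, 12.0, 4.0],
    [3.5, 8.0, 11.0, 4.5],
    [2.5, 8.5, 13.0, 3.5],
]
ensemble = average_predictions(fold_predictions)
```

Each fold model sees a different slice of the training data, so averaging their outputs tends to smooth out errors that are specific to any one split.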

Let's get to know our winners and how they became wildlife video experts! You can also dive into their open source solutions in the competition winners repo on GitHub.

Meet the winners

| Prize | Name |
|---|---|
| 1st place | Bishmoy Paul, Md. Awsafur Rahman, Najibul Haque Sarker, and Zaber Ibn Abdul Hakim |
| 2nd place | Kirill Brodt |
| 3rd place | Igor Ivanov |
| MATLAB Bonus | Azin Al Kajbaf and Kaveh Faraji |

Bishmoy Paul, Md. Awsafur Rahman, Najibul Haque Sarker, and Zaber Ibn Abdul Hakim

Place: 1st Place

Prize: $5,000

Hometown: Dhaka and Chittagong, Bangladesh

Username: Team RTX 4090 - najib_haq, awsaf49, Bishmoy, zaber666

Background

Team members are all 3rd-year undergraduate students in the Department of Electrical & Electronic Engineering at Bangladesh University of Engineering and Technology, located in Dhaka, Bangladesh.

What motivated you to compete in this challenge?

We are generally interested in participating in out of the box challenges, specifically if they have a positive impact on real life scenarios. Depth Estimation is normally used in autonomous driving situations. Application of this approach in measuring distance from camera traps and animals to monitor wildlife populations sounded very interesting in the first place. Besides, the probable solution methods overlapped with our area of expertise. So, we thought this might be a great opportunity for us to apply our knowledge and learn new applications to eventually be able to help in the great cause of conservation of nature.

Summary of approach

Our entire procedure can be summarized in the below image:

- Data processing: Our main strategy was to train on all of the data. But first we used StratifiedGroupKFold to split the dataset into 5 folds and measured all performance using the 5-fold cross-validation score. This told us the best epoch for each training configuration, namely the epoch at which our model typically reaches its minimum in optimization. Stopping there helps keep the model from being overfit or underfit. We saw that the larger the training images, the better the model seemed to generalize. Through our experiments, we saw that a solution incorporating multiple image sizes tended to have the best scores.

- Data augmentations: We used various data augmentations and saw the effect these had on training through the use of gradcam. The selected final augmentations are affine transformations, distortion, cutout, color augmentations, and togray.

- Loss function: We alternated between MAE and Huber loss across different models. Using MAE comes with some downsides, such as slow convergence and oscillation of the metric if a larger learning rate is used. Huber loss mitigates these issues because it is quadratic for small errors, so its gradients shrink as predictions approach the target.

- Ensemble: We trained 5 NFNetl2, 2 Resnest200, 2 EfficientNetB7 and 2 EfficientNetV2M models with varying image sizes as training input. The soft labels of the models were ensembled together with varying weights designed to get the optimal performance of each model.
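Two of the ideas above, Huber loss and a weighted ensemble, can be sketched in a few lines of plain Python. This is an illustration under assumptions, not the team's code: the `delta` threshold, the function names, and the example weights are all hypothetical.

```python
def huber_loss(error, delta=1.0):
    """Quadratic for |error| <= delta, linear beyond it (delta is an assumed value)."""
    abs_error = abs(error)
    if abs_error <= delta:
        return 0.5 * error ** 2
    return delta * (abs_error - 0.5 * delta)


def weighted_ensemble(predictions, weights):
    """Blend per-model predictions for one sample using normalized weights."""
    if len(predictions) != len(weights):
        raise ValueError("need exactly one weight per prediction")
    total = sum(weights)
    if total <= 0:
        raise ValueError("weights must sum to a positive value")
    return sum(p * w for p, w in zip(predictions, weights)) / total


small = huber_loss(0.5)   # inside the quadratic region
large = huber_loss(3.0)   # inside the linear region

# Hypothetical: two models predict 4.0 m and 6.0 m, weighted 3:1.
blended = weighted_ensemble([4.0, 6.0], [3, 1])
```

Near zero the Huber gradient scales with the error, which damps the oscillation a pure MAE loss can show at larger learning rates. For large errors it stays linear, like MAE.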

Kirill Brodt

Place: 2nd Place

Prize: $2,000

Hometown: Almaty, Kazakhstan

Username: kbrodt

Background

I'm doing research at the University of Montréal in Computer Graphics. I got my Master in Mathematics at the Novosibirsk State University, Russia. Lately, I fell in love with machine learning, so I was enrolled in Yandex School of Data Analysis and Computer Science Center. This led me to develop and teach the first open Deep Learning course in Russian. Machine Learning is my passion and I often take part in competitions.

Summary of approach

I've trained new efficientnetv2 CNN models with MAE loss. To capture the motion I've stacked a sequence of 5 frames channel-wise in one array. The frames are downsampled to 270x480 size. The model's output is a distance. I've trained the model with heavy augmentations for 80 epochs with the AdamW optimizer and CosineAnnealingLR scheduler. To reduce the predictions' variance I've ensembled models from different folds using the simple average. Then I've added these predictions as pseudo labels to the train set and finally retrained models.
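Channel-wise stacking can be sketched with NumPy as follows. The frames here are synthetic, the function name is hypothetical, and treating 270x480 as height x width is an assumption.

```python
import numpy as np


def stack_frames_channelwise(frames):
    """Concatenate H x W x C frames along the channel axis into H x W x (N*C)."""
    if not frames:
        raise ValueError("need at least one frame")
    if len({frame.shape for frame in frames}) != 1:
        raise ValueError("all frames must share the same shape")
    return np.concatenate(frames, axis=-1)


# Five synthetic RGB frames; 270 x 480 is assumed to be height x width.
rng = np.random.default_rng(0)
frames = [
    rng.integers(0, 256, size=(270, 480, 3), dtype=np.uint8) for _ in range(5)
]
stacked = stack_frames_channelwise(frames)  # shape: (270, 480, 15)
```

With this layout, a standard 2D CNN can take the sequence as one input once its first convolution accepts 15 channels instead of 3.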

What are some other things you tried that didn’t necessarily make it into the final workflow?

Data augmentation using linear interpolation of distances between two frames harms the performance.

Igor Ivanov

Place: 3rd Place

Prize: $1,000

Hometown: Dnipro, Ukraine

Username: vecxoz

Background

I'm a deep learning engineer from Dnipro, Ukraine. I specialize in CV and NLP and work at a small local startup.

What motivated you to compete in this challenge?

Environment conservation is a very important effort. I'm happy to take part and contribute. Additional inspiration came from the cute chimpanzees and other animals featured in the videos.

Summary of approach

My solution is an ensemble of 12 models, each of which is a self-ensemble of 5 folds. All models are based on a CNN-LSTM architecture with an EfficientNet-B0 backbone. Input data is a sequence of 7 or 9 video frames taken with an interval (time-step) of 1 or 2 seconds. Each sequence has an equal number of frames taken before and after the target frame. Each frame is a 3-channel image with a resolution of 512 x 512. Optimization was performed using an Adam optimizer and MAE loss.
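The sequence sampling described above can be sketched in plain Python. The function name is hypothetical, and the sketch leaves out how sequences near the start or end of a video are handled, since the write-up does not say.

```python
def sequence_timestamps(target_s, n_frames, step_s):
    """Timestamps (seconds) centered on the target, equally many before and after."""
    if n_frames < 1 or n_frames % 2 == 0:
        raise ValueError("n_frames must be a positive odd number")
    half = n_frames // 2
    return [target_s + offset * step_s for offset in range(-half, half + 1)]


# Seven frames at 2-second intervals around a target frame at t = 10 s.
timestamps = sequence_timestamps(target_s=10, n_frames=7, step_s=2)
```

An odd frame count puts the target frame in the middle of the sequence, with the same number of frames on either side.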

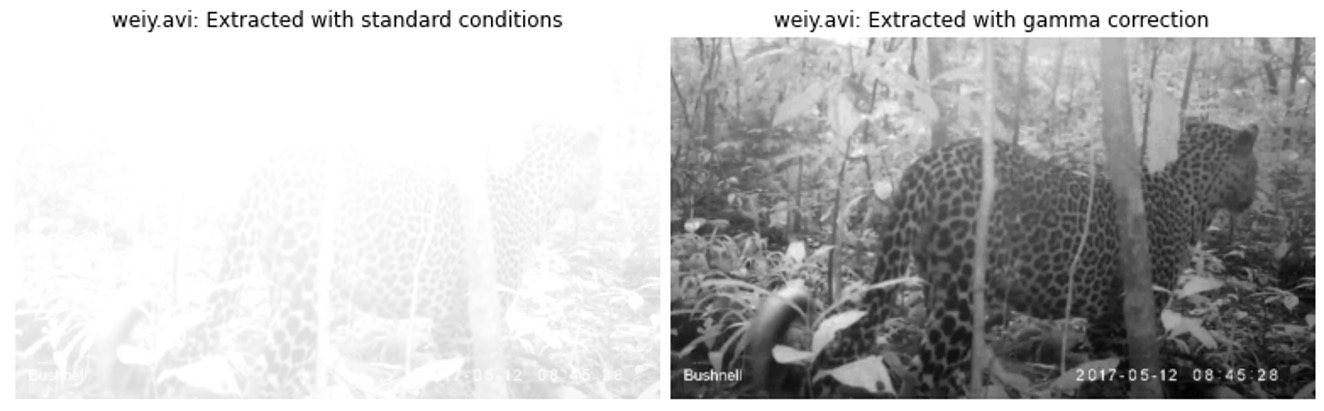

Significant improvements were obtained using different types of augmentation, especially affine transformations (flips, rotations) and Gaussian blur. Another source of improvement is gamma correction. Around 10% of all videos are overexposed, and gamma correction allows us to extract much more information from such examples.
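A minimal sketch of gamma correction with NumPy is shown below. It uses the common power-law convention, under which a gamma greater than 1 darkens the image. The gamma value and pixel values are illustrative assumptions; the settings actually used in the solution are not given here.

```python
import numpy as np


def gamma_correct(image_u8, gamma):
    """Apply out = 255 * (in / 255) ** gamma to an 8-bit image."""
    normalized = image_u8.astype(np.float64) / 255.0
    corrected = np.power(normalized, gamma)
    return np.clip(np.rint(corrected * 255.0), 0, 255).astype(np.uint8)


# Hypothetical bright pixels from an overexposed frame.
overexposed = np.array([[200, 230, 255]], dtype=np.uint8)
darker = gamma_correct(overexposed, gamma=2.0)
```

Bright values that sat close together are spread further apart, which is one way washed-out detail can become easier for a model to use. Fully saturated pixels stay at 255.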

Azin Al Kajbaf and Kaveh Faraji

Place: MATLAB Bonus prize

MathWorks, makers of MATLAB and Simulink software, sponsored this challenge and the bonus award for the top MATLAB user. They also supported participants by providing complimentary software licenses and learning resources. Congrats to Team K_A as the top finalist using MATLAB!

Prize: $2,000

Usernames: Team K_A - kaveh9877, AZK90

Background: Azin Al Kajbaf

I am a Ph.D. candidate in the Department of Civil & Environmental Engineering at the University of Maryland, and my area of research/academic focus is “Disaster Resilience.” My research involves the application of machine learning and statistical methods in coastal and climate hazard assessment. My research goal is to leverage data science and engineering to enhance the prediction and assessment of natural hazards in support of more robust risk analysis and decision-making.

Background: Kaveh Faraji

I am a Ph.D. candidate at the University of Maryland, and I work in the area of Disaster Resilience. My main research is focused on risk assessment of natural hazards such as flood and storm surge. I am employing geospatial analysis and machine learning approaches in my research.

Summary of approach

Our best private score with the MATLAB-only solution was MAE: 1.948.

- Step 1: We imported a publicly available pretrained 3D network (ResNet 3D 18) using MATLAB "importONNXLayers" function.

- Step 2: We used MATLAB for preprocessing videos, and we cut the frames out of videos at the timestamps that the distance needed to be estimated.

- Step 3: We used MATLAB "imageDatastore" and "Image Processing Toolbox" (used to augment the frames of videos) to prepare the data for Deep Learning Toolbox.

- Step 4: We trained the network using MATLAB Deep Learning Toolbox. We only considered two random partitions (80% training/ 20% validation) due to time limitation.

- Step 5: We post-processed the predictions of the trained networks (results of the two random partitions) from the previous step and combined them using MATLAB Regression Learner toolbox (Boosted Trees). The final submission file is the output of this step.

If you were to continue working on this problem for the next year, what methods or techniques might you try in order to build on your work so far?

In addition to a MATLAB-only solution, we generated a PyTorch+MATLAB solution with a final MAE of 1.859. This solution is based on combining the outputs of MATLAB and PyTorch using MATLAB Regression Learner toolbox (Boosted Trees). For PyTorch, we used a ResNet (2+1)D pretrained network and modified it to work with four channels (RGB + depth) instead of three. To obtain depth, we used "Monodepth2", which is mentioned on the competition website.

If we were to continue this project, we would add more channels that reflect depth. These depth channel inputs could potentially be created with Monodepth2 models trained on different datasets. We could also use other models for computing depth, such as MiDaS.
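The four-channel (RGB + depth) input can be illustrated with a short NumPy sketch. The function name and array sizes are hypothetical, and the depth map is a placeholder rather than real Monodepth2 output.

```python
import numpy as np


def add_depth_channel(rgb, depth):
    """Append an H x W depth map to an H x W x 3 RGB frame, giving H x W x 4."""
    if rgb.ndim != 3 or rgb.shape[2] != 3:
        raise ValueError("rgb must have shape (H, W, 3)")
    if depth.shape != rgb.shape[:2]:
        raise ValueError("depth must have shape (H, W) matching the RGB frame")
    return np.concatenate([rgb, depth[..., np.newaxis]], axis=-1)


# Tiny placeholder arrays; a real depth map would come from a depth model.
rgb = np.zeros((4, 6, 3), dtype=np.float32)
depth = np.ones((4, 6), dtype=np.float32)
rgbd = add_depth_channel(rgb, depth)  # shape: (4, 6, 4)
```

Further depth estimates, for example from a second depth model, could be appended the same way as a fifth or sixth channel.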

Thanks to all the participants and to our winners! Special thanks to MathWorks for enabling this fascinating challenge, and to the Max Planck Institute for Evolutionary Anthropology (MPI-EVA) and the Wild Chimpanzee Foundation (WCF) for providing the data to make it possible!